Model Overview

Model Overview

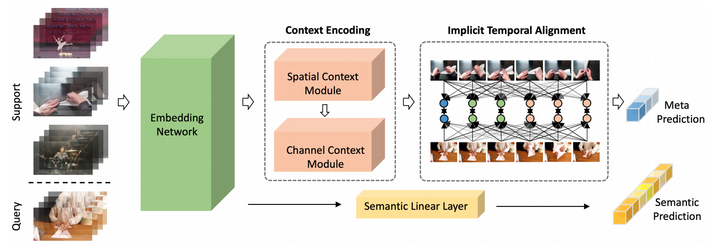

Abstract

Few-shot video classification aims to learn new video categories with only a few labeled examples, alleviating the burden of costly annotation in realworld applications. However, it is particularly challenging to learn a class-invariant spatial-temporal representation in such a setting. To address this, we propose a novel matching-based few-shot learning strategy for video sequences in this work. Our main idea is to introduce an implicit temporal alignment for a video pair, capable of estimating the similarity between them in an accurate and robust manner. Moreover, we design an effective context encoding module to incorporate spatial and feature channel context, resulting in better modeling of intra-class variations. To train our model, we develop a multi-task loss for learning video matching, leading to video features with better generalization. Extensive experimental results on two challenging benchmarks, show that our method outperforms the prior arts with a sizable margin on SomethingSomething-V2 and competitive results on Kinetics.