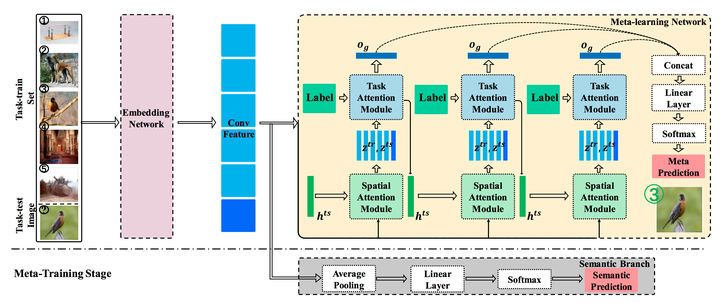

An illustration of few-shot classification via our attention-based network. Image Credit: Songyang Zhang

Abstract

Despite the recent success of deep neural networks, it remains challenging to efficiently learn new visual concepts from limited training data. To address this problem, a prevailing strategy is to build a meta-learner that learns prior knowledge on learning from a small set of annotated data. However, most existing meta-learning approaches rely on a global representation of images and a meta-learner with complex model structures, which are sensitive to background clutter and difficult to interpret. We propose a novel meta-learning method for few-shot classification based on two simple attention mechanisms: a spatial attention that localizes relevant object regions, and a task attention that selects similar training data for label prediction. We implement our method via a dual-attention network and design a semantic-aware meta-learning loss to train the meta-learner network in an end-to-end manner. We validate our model on three few-shot image classification datasets with extensive ablation studies, and our approach shows competitive performance on these datasets with fewer parameters. To facilitate future research, code and data splits are available at https://github.com/tonysy/STANet-PyTorch
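
To make the two attention mechanisms concrete, the following is a minimal sketch in plain Python of how they could fit together. It is not the STANet implementation: the function names, the `object_query` scoring vector, the dot-product similarity, and the toy feature values are all assumptions made for illustration, and the semantic-aware loss is not modeled.

```python
import math

def softmax(scores):
    # Numerically stable softmax over a list of floats.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def spatial_attention_pool(feature_map, object_query):
    # feature_map: one feature vector per spatial location.
    # object_query: hypothetical vector that scores how object-like a location is.
    weights = softmax([dot(f, object_query) for f in feature_map])
    dim = len(feature_map[0])
    # Attention-weighted average, so background locations contribute little.
    return [sum(w * f[d] for w, f in zip(weights, feature_map)) for d in range(dim)]

def task_attention_predict(query_emb, support_embs, support_labels, num_classes):
    # Weight each support example by its similarity to the query,
    # then accumulate those weights per class label.
    weights = softmax([dot(query_emb, s) for s in support_embs])
    probs = [0.0] * num_classes
    for w, label in zip(weights, support_labels):
        probs[label] += w
    return probs

# Toy 2-way 1-shot episode. Each image has two locations: object, background.
# Feature layout (assumed): [objectness, class-0 cue, class-1 cue].
object_query = [2.0, 0.0, 0.0]
support_maps = [
    [[3.0, 2.0, 0.0], [0.0, 0.0, 0.5]],  # support image of class 0
    [[3.0, 0.0, 2.0], [0.0, 0.5, 0.0]],  # support image of class 1
]
support_labels = [0, 1]
query_map = [[3.0, 1.8, 0.1], [0.0, 0.0, 1.0]]  # class-0 object, cluttered background

support_embs = [spatial_attention_pool(m, object_query) for m in support_maps]
query_emb = spatial_attention_pool(query_map, object_query)
probs = task_attention_predict(query_emb, support_embs, support_labels, num_classes=2)
predicted = max(range(len(probs)), key=lambda c: probs[c])
print(predicted, probs)
```

In this toy episode the background location of the query carries a misleading class-1 cue. Spatial pooling suppresses it before the task attention compares the query with the support set, which is the intuition behind avoiding a single global image representation.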